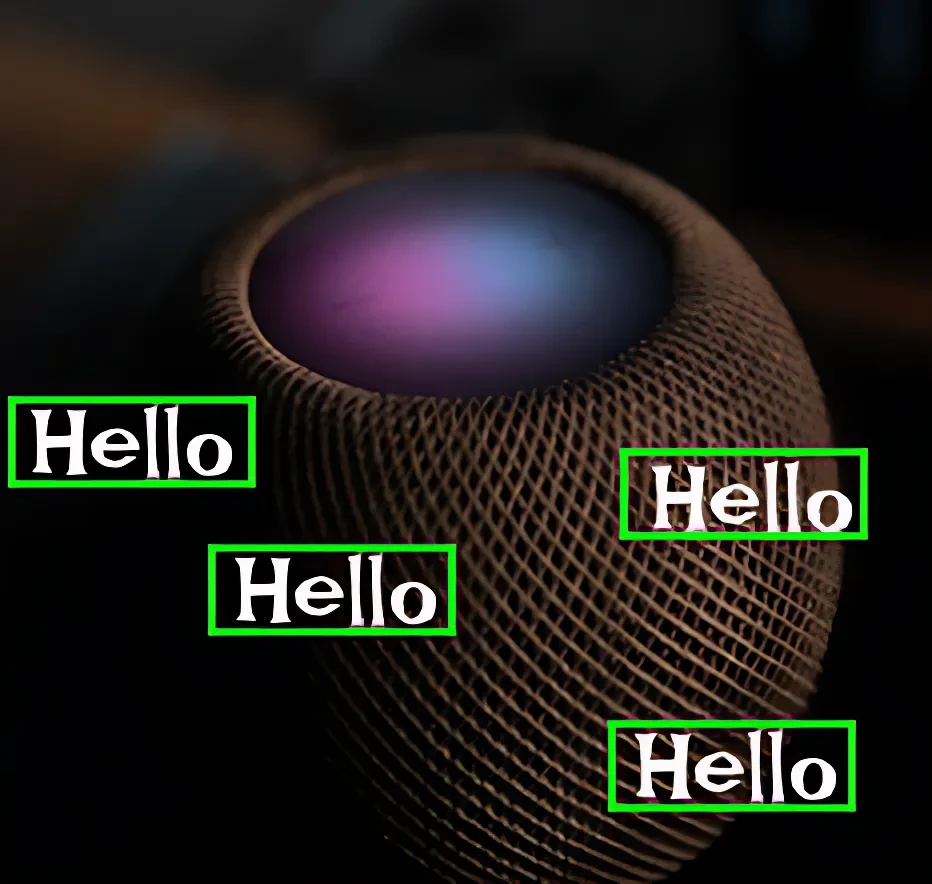

Siri Wake Words in US English


Project Overview:

Objective

To get more people using Siri, Apple needed a larger and more representative American English dataset. To improve Siri's voice recognition, Apple collected a large set of "Hey Siri" recordings from English speakers across the US.

Scope

We collected a wide variety of US English audio clips, with particular focus on different accents and real-world recording situations. To get the most out of our machine learning tools, we concentrated on how people naturally say the wake word, which produced a robust dataset.

Sources

- Voice Assistant Users: Collaborate with Siri users who consent to contribute audio clips of them saying “Hey Siri” in different contexts.

- Voice Actors: Hire professional voice actors to record scripted wake word samples for added diversity and control.

- Public Domain Recordings: Extract publicly available audio recordings with instances of the “Hey Siri” wake word in US English.

Data Collection Metrics

- Total Audio Clips Collected: 100,000

- Total Clips Annotated: 100,000

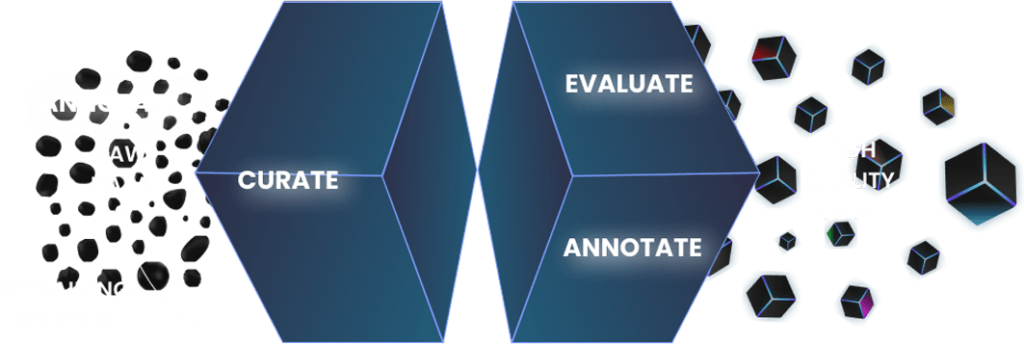

Annotation Process

Stages

This process produced a highly reliable dataset for training our voice recognition models.

Annotation Metrics

- Audio Clips with Wake Word Annotations: 50,000

- Speaker Demographic Metadata: 50,000

- Recording Condition Metadata: 50,000
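The three annotation types above (wake word annotations, speaker demographic metadata, and recording condition metadata) suggest a per-clip record. The sketch below shows one way such a record could be structured and sanity-checked. All class names, field names, and example values are hypothetical; the case study does not describe its actual schema.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class SpeakerMetadata:
    # Hypothetical demographic fields; the case study does not list them.
    age_range: str   # e.g. "25-34"
    gender: str
    region: str      # e.g. a US state or dialect region
    accent: str


@dataclass
class RecordingConditions:
    # Hypothetical recording-condition fields.
    environment: str                # e.g. "quiet_room", "car", "street"
    device: str
    snr_db: Optional[float] = None  # signal-to-noise ratio, if measured


@dataclass
class ClipAnnotation:
    clip_id: str
    duration_s: float
    wake_word_present: bool
    # Start/end of "Hey Siri" within the clip, in seconds (None if absent).
    wake_word_start_s: Optional[float]
    wake_word_end_s: Optional[float]
    speaker: SpeakerMetadata
    conditions: RecordingConditions


def validate(ann: ClipAnnotation) -> list[str]:
    """Return a list of consistency problems; an empty list means the record passes."""
    problems = []
    if ann.wake_word_present:
        if ann.wake_word_start_s is None or ann.wake_word_end_s is None:
            problems.append("wake word marked present but span is missing")
        elif not (0.0 <= ann.wake_word_start_s < ann.wake_word_end_s <= ann.duration_s):
            problems.append("wake word span is empty or lies outside the clip")
    elif ann.wake_word_start_s is not None or ann.wake_word_end_s is not None:
        problems.append("span given but wake word marked absent")
    return problems
```

For example, a record for a 2.4-second clip whose wake word spans 0.3 s to 0.9 s passes, while the same record with an end time of 3.0 s is flagged. Automated checks like this are the kind of "smart software" pass that can run before human review.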

Quality Assurance

Stages

We adhere to stringent quality assurance protocols. Every annotated clip passed through several checkpoints and was reviewed by both automated tools and experienced human reviewers to make our datasets as accurate and reliable as possible.

QA Metrics

- Annotation Validation Cases: 5,000 (10% of total)

- Privacy Audits: 30,000 (for user-contributed data)
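The validation figure above (5,000 cases, 10% of total) implies a total of 50,000 annotated clips, matching the Annotation Metrics. A common way to pick such a subset is a reproducible simple random sample. The sketch below assumes that approach; the function names, the fixed seed, and the error-rate estimate with a 95% normal-approximation margin of error (ignoring finite-population correction) are illustrative assumptions, not the project's documented procedure.

```python
import math
import random


def sample_for_validation(clip_ids, fraction=0.10, seed=0):
    """Draw a reproducible simple random sample of clip IDs for human validation."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    k = round(len(clip_ids) * fraction)
    rng = random.Random(seed)  # fixed seed so the same sample can be re-drawn for audits
    return rng.sample(list(clip_ids), k)


def error_rate(validated):
    """validated maps clip_id -> True if the reviewer confirmed the annotation."""
    if not validated:
        return 0.0
    rejected = sum(1 for ok in validated.values() if not ok)
    return rejected / len(validated)


def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for an observed error rate p on n samples."""
    return z * math.sqrt(p * (1 - p) / n)
```

With 50,000 hypothetical IDs such as `clip-00000` to `clip-49999`, `sample_for_validation` returns 5,000 distinct IDs. Even in the worst case (p = 0.5), a 5,000-clip sample bounds the estimated annotation error rate to within about 1.4 percentage points.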

Conclusion

This project demonstrates our ability to gather and annotate large-scale, diverse datasets, which are crucial for developing advanced AI technologies. Our commitment to quality and precision makes us a trusted partner for AI data needs.

Quality Data Creation

Guaranteed TAT

ISO 9001:2015, ISO/IEC 27001:2013 Certified

HIPAA Compliance

GDPR Compliance

Compliance and Security
